Confluence for 2.8.26

Anthropic releases Claude Opus 4.6. Claude in PowerPoint arrives. Tolerating ambiguity and working with AI. What people will let AI do.

Welcome to Confluence. It was a big week in the world of generative AI. Here’s what has our attention at the intersection of generative AI, leadership, and corporate communication:

Anthropic Releases Claude Opus 4.6

Claude in PowerPoint Arrives

Tolerating Ambiguity and Working With AI

What People Will Let AI Do

Anthropic Releases Claude Opus 4.6

And it can just do things.

Anthropic released Claude Opus 4.6 on Thursday. As the name and numbering convention suggest, it is an incremental yet meaningful improvement over Opus 4.5. We’ve already had a few small moments where it feels smarter, such as when this Confluence writer inadvertently repeated a question from a few months back and Opus 4.6 began its response with “We covered this back in November.” It’s the type of reply we’d expect from an exasperated colleague, not an LLM.

From a technical perspective, the improvement that matters most is in long-context retrieval and reasoning. Opus 4.6 is the first Opus-class model to support a one-million-token context window, and because it also retrieves and reasons over long inputs more reliably, context rot (which we’ve written about in the past) becomes significantly less of an issue. The model does more, with more context, over a longer task horizon than its predecessors, and makes fewer mistakes in the process. This opens the door to generative AI completing more complex knowledge work than was previously possible, including the elusive ability to create and edit PowerPoint slides directly within the application (more on that below).

If you’re working with Opus 4.6 (and if you’re not, you should experiment with it on a personal account), the real lesson is that leading models can increasingly just do things, including things we wouldn’t have considered attempting before. About an hour after the release, we had a previously scheduled conversation within our firm about generative AI. To illustrate the model’s capabilities, we gave Opus 4.6 our colleague directory and asked it to create an interactive map of where our colleagues and offices are based, pulling in headshots and links to professional bios from the web. It blew us away. The shortcomings were minor: colleague locations were often off by a few blocks, and some formatting needed polish. But the point is that a working, visually compelling prototype existed within minutes of a casual prompt. And we did this in Claude’s standard web app. Users can accomplish even more through Claude Code, which handles longer and more complex tasks, or through direct integrations like Claude in PowerPoint and Excel.

Our advice: spend time with Opus 4.6 just asking it to do things for fun. Maps, interactive tools, visualizations, whatever comes to mind. It won’t get everything perfect, but the exercise will give you a better sense of where the frontier actually is. We expect it’s further along than most people realize. Increasingly, the bottleneck for knowledge work won’t be execution capability. It will be the quality of our ideas and the specificity of our asks.

Claude in PowerPoint Arrives

If this is the worst PowerPoint AI you’ll use for the rest of your life, the future looks bright.

We have mentioned Anthropic’s Excel plugin, Claude in Excel, a few times. It is impressing many people in financial services with its ability to build, work in, and edit Microsoft Excel worksheets. And these are not trivial use cases: people are using it for sophisticated financial modeling and more.

But what many of our readers have been waiting for is an AI model that can function at a high level inside PowerPoint, and three days ago that arrived in the form of Anthropic’s Claude in PowerPoint. (It dropped on Thursday in the middle of our all-firm meetings, and although it reinforces consultant stereotypes, several members of our firm were testing it within minutes of the announcement. Such is the desire in our firm NOT to spend time making slides.)

It works as a plugin inside PowerPoint itself, in a sidebar where you chat with Claude, give direction, ask for edits, etc.:

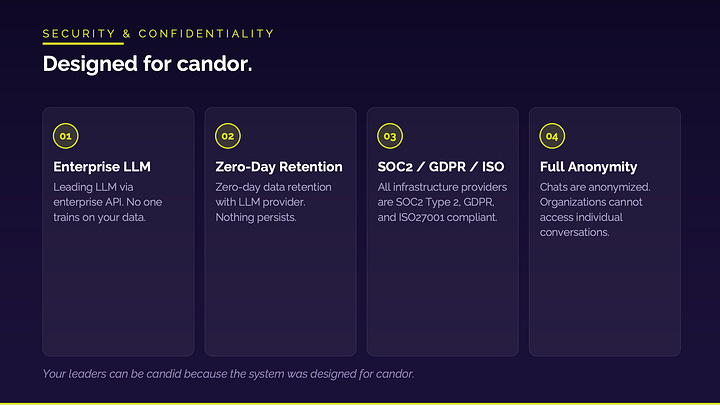

And first reactions? Impressive. Certainly very helpful. Here are two examples. The first is a PowerPoint created from a one-page marketing sheet for our AI coach, ALEX. We first uploaded our firm’s PowerPoint template, and then gave the one-pager to Claude along with this prompt:

“This is the firm’s PPT template. Attached is our one-pager on ALEX. Make an amazing, incredible deck from the file. Really blow me away. Channel your inner Duarte. DO NOT be constrained by the master slide layouts, but do keep the fonts / colors / general branding. Do this work as a world-class designer of this type of content. I want something we can hang on a wall as art it’s so beautiful. Start by making a plan. Ask me any questions you want to ask. Then go to work.”

Claude then followed an interesting process. First it looked at the template to understand the branding and the master slide layouts. Then it proposed a plan for the slide deck:

🎨 My Design Plan for the ALEX Deck

Brand DNA I’ve extracted:

Primary purple: #4D3292 (deep, rich CRA | Admired Leadership purple)

Accent: #D7DF23 (electric lime/chartreuse — bold, modern)

Support: Muted purple tones (#595575), black, white

The PDF has a sophisticated, editorial feel — black header bar, full-bleed imagery, premium typography

My Duarte-inspired design approach: I’m going to create a 7-slide narrative arc that tells the ALEX story as a journey from problem → solution → proof — with bold, cinematic typography, generous white space, and dramatic visual contrast.

Slide Architecture:

Title — “ALEX: The AI Leadership Advisor”
Full-bleed deep purple background with large, heroic type. Lime accent as a bold horizontal rule. Cinematic.

The Problem — “Great advice shouldn’t be a privilege”
Stark white slide, massive pull-quote typography. A single provocative statement. Let it breathe.

What Makes ALEX Different — The Content + The Conversation
Two-panel split design (purple/white). Two pillars side by side. Clean iconography.

The Gap — Where ALEX Lives
Visual storytelling: the moment between challenge and decision. Dark, moody purple with lime accent. Editorial feel.

Security & Trust — “Designed for Candor”
Clean, authoritative. Badge/shield-style layout with certifications. Light background, strong typography.

Trusted By — Client logos / names
Elegant grid of enterprise names. Social proof, understated power.

Contact / CTA — “Schedule a Demonstration”
Full purple with lime accent. Clear, confident call-to-action.

Fonts: I’ll use a premium pairing — a strong geometric sans for headers (Montserrat or the closest match to CRA’s branding) paired with a clean body font.

Shall I proceed? Or would you like to adjust the narrative structure, number of slides, or emphasis areas? Any specific client names you want highlighted or hidden?

I’m ready to build something extraordinary. Let me know if you want changes, or I’ll start creating!

We asked a few questions and then it was off to the races. As it worked, it created the slides one at a time, and then, in a surprise to us, engaged in its own quality assurance process. It reviewed the entire presentation for visual continuity, making changes to the slide master layouts as it did. Then it edited individual slides for more visual sophistication. Then it looked for and fixed any overlaps of text, graphics, and sections. We noticed an alignment issue on slide one, which we noted and Claude fixed. The result is below:

We don’t know that this blew us away, but it’s an impressive deck with decent taste. Certainly very good for a first attempt, and we know it would be better if we included a folder of photographic imagery for Claude to use. And it only took a few minutes to create.

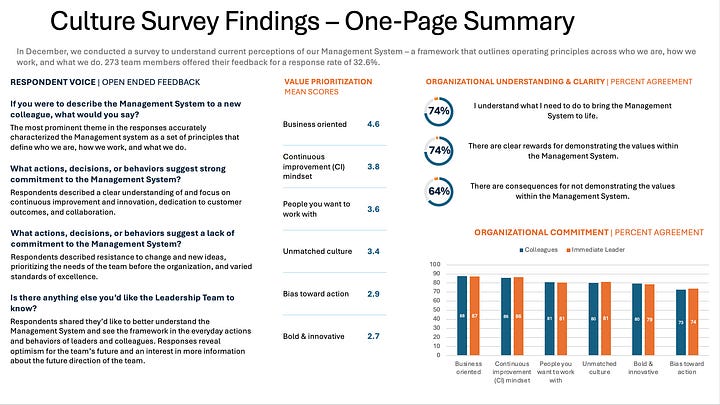

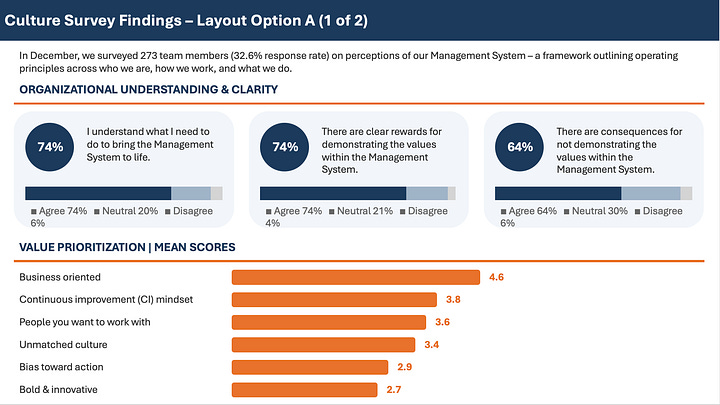

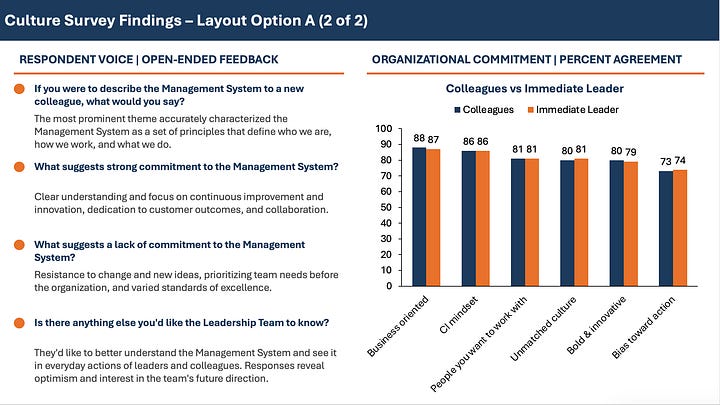

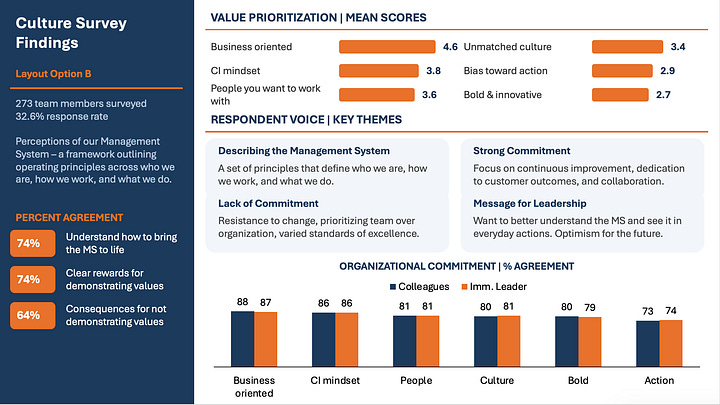

As a second example, a colleague asked Claude to “generate three alternative layout options of the first slide” in an existing one-slide presentation. That’s the only direction she provided. Below are the original slide and the three alternatives Claude created:

This really impressed us, especially the continuity of the findings text and the data across all four slides. Again, we made no edits — this was Claude’s first attempt.

We’ve joked for some time that people in most organizations weren’t wondering when the human race would achieve AGI, but they were wondering when the human race would achieve PowerPoint AGI. As a first iteration, Claude in PowerPoint is very close, and for those who have it and do a lot of work in PowerPoint, their work lives will change in at least one significant way.

Tolerating Ambiguity and Working With AI

What can John Keats teach us about working more effectively with generative AI?

Over dinner this past week, two Confluence writers shared the observation that those who are more willing to tolerate ambiguity tend to get more out of working with generative AI. Being the nerds that we are, we turned the conversation to the 19th-century poet John Keats’s concept of “negative capability” (essentially, the ability to sit with uncertainty; more on that below) and how it applies to working with generative AI. To explore this connection further, and to put Claude Opus 4.6 through its paces, we engaged Opus 4.6 in the following conversation over the weekend.

User: Can you explain the concept of “negative capability”?

Claude Opus 4.6: Negative capability is a term coined by John Keats in a letter to his brothers in December 1817. He described it as the quality “when a man is capable of being in uncertainties, mysteries, doubts, without any irritable reaching after fact and reason.”

Keats was talking about what makes great artists — particularly Shakespeare — exceptional. The idea is that the highest creative and intellectual minds can sit comfortably with ambiguity, contradiction, and the unknown rather than forcing premature resolution or neat explanations onto complex experiences. Shakespeare, in Keats’s view, could inhabit wildly different characters and perspectives without needing to resolve them into a single authorial viewpoint.

The concept has taken on a life well beyond literary criticism. It shows up in psychotherapy (Wilfred Bion drew on it heavily), leadership theory, and philosophy. In each case, the core insight is the same: there’s a kind of strength in resisting the urge to collapse complexity into simple answers. The “negative” isn’t about pessimism — it’s about the capacity to not act, not conclude, not reach for certainty when the situation doesn’t warrant it.

It’s a particularly resonant idea in a world that rewards decisiveness and quick takes. Keats was essentially arguing that the ability to dwell in uncertainty — to let something remain unresolved long enough to actually understand it — is a higher-order capability than having a ready answer for everything.

User: How do you think this applies to working with generative AI?

Claude Opus 4.6: This is a really rich connection, and I think it cuts in a few directions.

The most obvious one is that generative AI creates a massive temptation to do the opposite of negative capability. The technology is essentially an “irritable reaching after fact and reason” machine — it will always give you an answer, always resolve ambiguity, always produce something coherent-sounding. That speed and fluency can short-circuit the kind of productive uncertainty where the best thinking actually happens. If you’re a leader working through a complex stakeholder situation or a communicator trying to figure out the right narrative for a difficult transition, there’s real danger in letting AI collapse the problem too early.

But here’s where it gets more interesting: used well, AI can actually expand your capacity for negative capability. It can help you hold more possibilities open simultaneously. You can use it to pressure-test your assumptions, explore perspectives you hadn’t considered, or map out the full complexity of a situation before converging on a direction. The key distinction is whether you’re using AI to get to an answer faster or to understand the problem more deeply before you commit to an answer.

There’s also a meta-level version of this that matters a lot right now. We’re in a period of genuine uncertainty about what AI is, what it will become, and what it means for how people work and lead. The people who are navigating this moment best — in my observation — are the ones practicing negative capability about AI itself. They’re engaging seriously without either dismissing it or making breathless predictions. They’re experimenting and learning rather than locking in a fixed position.

The people who struggle most tend to fall into one of two traps: premature certainty that AI changes everything, or premature certainty that it changes nothing. Both are “irritable reaching after fact and reason” in Keats’s sense — attempts to resolve discomfort with ambiguity rather than staying in it long enough to develop real understanding.

For someone teaching others how to work with AI, I’d think the Keats framing is actually a pretty powerful pedagogical tool — it gives people permission to not have it all figured out yet, which is exactly where most organizations are.

User: I have a slightly different take. I think some sense of negative capability is necessary to work with generative AI effectively. These systems are not deterministic. There’s no “right way” to do anything with them. It often takes experimentation, creativity, and patience. You need to have a tolerance for their weirdness. In many ways it’s a similar capacity to what it takes to engage effectively with people. I’ve noticed that the people who get the most out of AI have this capacity. People who expect more certainty can tend to get more frustrated or dismissive.

Claude Opus 4.6: That’s a better and more practical framing than mine. I went to the strategic level, but you’re pointing at something more fundamental about the basic interaction model.

You’re right that there’s a real parallel to interpersonal effectiveness. Working with a person — coaching them, collaborating with them, trying to get the best out of them — requires tolerance for the fact that they won’t always respond the way you expect. You have to read, adjust, try a different angle, stay curious about what’s happening rather than getting frustrated that it didn’t work the first time. The people who are great at that tend to be great with AI, and I think you’re identifying the underlying trait that explains why.

The determinism point is especially sharp. A lot of the frustration I’d imagine you see in training sessions comes from people approaching AI like software — expecting that if they push the right button, they get the right output. When it doesn’t work that way, they conclude the tool is broken rather than reconsidering their approach. That’s the absence of negative capability in action. They can’t sit with the ambiguity of “this didn’t work, but something here might if I come at it differently.”

And the flip side is that the people who naturally experiment — who treat an unexpected output as information rather than failure — tend to develop an intuitive feel for these systems much faster. They build a working mental model not through rules but through repeated, curious engagement. Which is basically how you build a working mental model of another person.

This could be a genuinely useful frame for your training work. It gives people a concrete way to understand why they might be struggling that doesn’t blame the technology or their intelligence — it reframes it as a learnable disposition. And it connects AI fluency to a capability most professionals already value in other contexts.

There’s a lot there to chew on. We found Opus 4.6’s responses interesting on two levels: how it responded and the content of its responses. As for the how, two things stood out. First, there’s definitely a whiff of sycophancy here (“This is a really rich connection” … “That’s a better and more practical framing than mine”) that we did not see as much of with Opus 4.5. Second, Opus 4.6 seems so comfortable talking in the first person that at times it goes too far (“in my observation”), something we’ve seen in other models before (most notably OpenAI’s o3). To Claude’s point in the exchange above, getting to know a new model is a bit like getting to know a person. Opus 4.6 is barely 72 hours old, so we’ll continue to get to know it in the coming days and encourage our readers to do the same.

The content of the responses shows the intelligence of the model, not to mention its breadth of knowledge. We came to the conversation with one sense of how a toleration of ambiguity leads to more productive engagement with generative AI. Opus 4.6’s perspective both sharpened our initial thinking and gave us more to think about. For example, we hadn’t considered the flip side of our observation: that AI itself is a powerful engine for collapsing ambiguity too early, and that the same tolerance for uncertainty that makes someone effective with AI can also help them resist that pull. Opus 4.6 also makes a sharp meta-level point: that we’re all navigating genuine uncertainty about what AI is and will become, and the people doing that best are the ones practicing negative capability about the technology itself by “engaging seriously without either dismissing it or making breathless predictions.”

By giving us more to think about — and more angles from which to explore our initial question — Opus 4.6 proved its own point: “used well, AI can actually expand your capacity for negative capability. It can help you hold more possibilities open simultaneously.”

What People Will Let AI Do

New research on the moral boundaries of automation.

Last week, we looked at a more nuanced picture of generative AI exposure across the labor market, examining what AI can technically do, which jobs it can affect, and how. Today, we’d like to focus on a different question: what will people let generative AI do?

A new write-up this week from Harvard Business School’s Working Knowledge highlights a working paper from October by HBS Assistant Professor James Riley and coauthor Simon Friis. The paper, “Performance or Principle: Resistance to Artificial Intelligence in the US Labor Market,” surveyed 2,357 Americans about 940 occupations. The researchers found that respondents support automating roughly 30 percent of jobs given AI capabilities at the time of the survey. When asked to imagine a future AI that outperforms humans at lower cost, that number nearly doubles. But even in that scenario, respondents remained ambivalent about 42 percent of occupations, with roughly 12 percent of all occupations, including clergy, childcare workers, funeral directors, athletes, and artists, viewed as essentially off-limits.

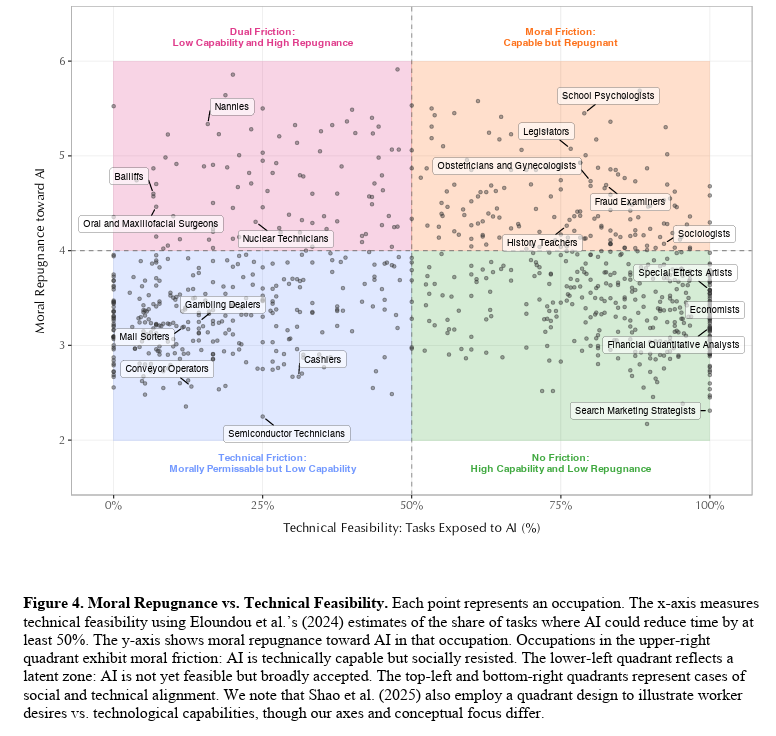

The authors draw a useful distinction between “performance-based resistance,” which is technical and fades as AI improves, and “principle-based resistance,” which is moral and doesn’t. They point to previous examples like GMOs, nuclear power, and stem cell research, where public sentiment shaped adoption regardless of capability. Riley maps this tension across 940 occupations in the figure above, with technical feasibility on one axis and moral repugnance on the other. The upper-right quadrant is where it gets interesting: jobs AI could technically do but that people don’t want it to do.

The most practically useful finding may be that 94 percent of respondents support AI as a collaborative tool to augment human work. The appetite for AI that helps people do their jobs better is broad and durable, while the appetite for AI that replaces them is conditional, contested, and, in some cases, firmly off the table. Riley and Friis suggest that leaders, faced with this sentiment, exercise caution in rolling out AI across their organizations. The authors advise leaders to prioritize performance when evaluating AI opportunities, factor social norms into long-term planning, be transparent about AI use, and know the risks of crossing moral lines.

This is sound advice, but we’d add one thing. If principle-based resistance is real, as this study seems to indicate, then the language leaders use to describe AI initiatives matters more than ever. Framing AI deployment purely in terms of efficiency and cost savings risks triggering exactly the resistance the study describes. Employees hear “efficiency” and think “replacement.” The 94 percent augmentation number suggests a different frame is available — one that emphasizes what people can accomplish with these tools rather than what the tools can accomplish without people. The distinction between “AI will do this in your place” and “AI will help you do this better” may sound like semantics, but this research suggests it’s the difference between support and resistance.

We’ll leave you with something cool: Anthropic is airing its first Super Bowl campaign on Sunday, mocking the idea of ads in AI conversations. OpenAI’s Sam Altman called the ads “funny” but “clearly dishonest.” Judge for yourself.

AI Disclosure: We used generative AI in creating imagery for this post. We also used it selectively as a creator and summarizer of content and as an editor and proofreader.