Confluence for 3.8.26

The steady shift from chatbots to agents. Microsoft announces Copilot Tasks. What happens when you set agents loose. Organizational overhang, measured.

Welcome to Confluence. Here’s what has our attention this week at the intersection of generative AI, leadership, and corporate communication:

The Steady Shift From Chatbots to Agents

Microsoft Announces Copilot Tasks

What Happens When You Set Agents Loose

The Organizational Overhang, Measured

The Steady Shift From Chatbots to Agents

More data points showing agentic AI is coming to mainstream tools.

Over the past few months, we’ve written extensively about Claude Code, Anthropic’s command-line coding agent, and then Cowork, a desktop tool that gives Claude the ability to operate a computer, manage files, and complete multi-step tasks autonomously. The shift from Claude Code to Cowork represents a trajectory that we’re now seeing play out more broadly: agentic AI capabilities moving from arcane developer tools toward the mainstream. The past 10 days brought two more data points. OpenAI released GPT-5.4, which integrates tool search, native computer use, and multi-step task orchestration directly into its flagship model. And Microsoft announced Copilot Tasks, which gives Copilot its own computer and browser to work in the background, allowing it to handle recurring jobs, generate documents, and coordinate across applications on a user’s behalf. Microsoft’s framing was notably direct and, we think, nicely captures what’s going on: “Conversational chatbots were the first chapter of AI. Today is the beginning of the second.”

It’s increasingly clear that the leading labs are moving from chat as the product itself to chat as the surface layer to orchestrate much more sophisticated action. For three years, chat was the whole ballgame. Now, chat is the place where you describe what you need before the AI goes and does it—planning, executing, and delivering complete work across tools and files. We expect this shift will happen gradually, and then suddenly. For many, of course, it’s already happening.

Within our own firm, we’ve watched this play out in real time over the past few weeks as more of us have moved from the standard Claude chat interface to Claude Cowork. Nearly every day, one of us receives a text from a colleague to the effect of “Wow, I see it now” or “I can’t believe what I just watched Cowork do.” Working with AI in this way—watching it open files, plan, build documents, iterate and check its work on its own—is a qualitatively different experience from exchanging messages in a chat window. It can be equal parts startling, exhilarating, and a little scary. But once you see it, you see it.

Here are some examples of how this writer has used Cowork in the past week:

Reviewing 250+ pages of meeting transcripts across 12 files to produce a 15-page summary report with key themes, quotes, and takeaways

Analyzing 25 standard operating procedure documents to produce an AI overlap assessment, summary PowerPoint presentation, and 12-page AI integration guide

Creating a web-based video game based on a company’s past eight quarters of earnings data

Each of these represents a substantial body of work of its own, as opposed to assistance with one discrete task. The effort required to complete these manually would be measured in days, possibly weeks. Cowork took between 15 and 30 minutes for each of these, with the bulk of the human time spent on verification and refinement.

The foundational skills for working with AI in this mode are similar to those we need to work effectively with the chat interface we’re used to. You’re still prompting the tool, providing context, and engaging in a conversation about what you want. But the possibilities this opens up become much more sophisticated, as the tools increasingly take on real work rather than help with isolated parts of the work. The experience feels much closer to delegating to a capable colleague: aligning on the goal and the important context, stepping away, and coming back to review, discuss, and refine the finished product.

It’s still early. Claude Code, Cowork, and OpenAI’s Codex remain relatively niche tools. But GPT-5.4 signals that agentic features are migrating into products that millions already use, and Microsoft’s announcement suggests Copilot will follow (more on that later in this edition). The gap between what power users can do today and what the average knowledge worker will be able to do tomorrow is narrowing faster than most organizations may realize.

For leaders, this has immediate implications for how we train and develop our teams. The fundamental skills we’re teaching now—providing clear context, giving precise direction, delivering actionable feedback—will hold. These are the same skills that effective delegation has always demanded, and they will only become more important as AI handles more complete work. The chatbot interface is actually a helpful on-ramp to the more powerful capabilities that are quickly coming. Organizations investing in developing that fluency now, while the tools are still maturing, will be far better positioned than those who wait for agentic capabilities to arrive en masse. We may look back on these past three years as a warm-up period. And if the current trajectory holds, the window to warm up is closing.

Microsoft Announces Copilot Tasks

If organizations don’t mess up deployment, this could be a big deal.

As we note in the piece above, Microsoft has launched a new Copilot feature, Copilot Tasks, in research preview. It appears to be a more agentic version of Copilot, similar to Anthropic’s Claude Cowork. The idea: you tell Copilot what you want done, it builds a plan, and then it goes and does it. It runs inside a sandboxed cloud browser that Microsoft controls, with the ability to access websites, apps, and files on your behalf, pausing to ask permission before it does anything consequential. There’s not much real-world work to see with Tasks online yet, but Microsoft has published a promo video.

And Satya Nadella has shared a more practical example in his LinkedIn feed. Microsoft is calling this Copilot’s “second chapter.” The first chapter was chat. The second chapter is action.

This will all sound familiar if you’ve been following our experience with Cowork. Cowork is amazing and, when given a lot of context like chat histories and work files, has blown away even the most AI-experienced members of our team. The architectures differ: Copilot Tasks runs in Microsoft’s cloud, spawning a contained browser that interacts with web services for you, while Cowork runs locally on your desktop, working directly with your files and connected tools through an expanding ecosystem of enterprise plugins. But when two of the largest AI companies independently arrive at the same interaction model within weeks of each other, you can bet that this is where things are going.

The capability unlock for this new form of AI use for non-technical business users is significant. Once you get over the learning curve, having a general agent on your computer really can change the game. There is a meaningful difference between describing what you need and getting a suggestion versus describing what you need and coming back to finished work product: organized files, synthesized research, formatted documents, drafted outlines for client sessions with context pulled from your calendar and your notes. We wrote a few weeks ago that Cowork was a portent of things to come from all the generative AI labs. Copilot Tasks is that portent arriving for those living in the Copilot ecosystem.

Microsoft has hundreds of millions of paid Microsoft 365 seats and plans to bundle Copilot into standard subscription tiers, dropping the $30 add-on that slowed enterprise adoption. Seventy percent of Fortune 500 companies have already piloted or deployed Copilot in some form. If agentic capabilities become a default feature of the productivity suite most organizations already pay for, the addressable population of people using AI this way goes from early adopters to everyone. That is a very big deal.

But as with many things, there’s a catch. Making agentic AI available and making it useful inside an organization are two very different things, and our confidence in the second is considerably lower than our confidence in the first. IT departments have to actually enable it. People have to learn how to use it. Managers have to reward them for doing so. And these hurdles are significant. At this moment, all Copilot users have, by default, the ability to use “ChatGPT 5.2 Extended Thinking,” one of the most powerful generative AI models in the world. But in our work with many, many clients, almost nobody in a full room raises a hand when we ask if they know this ability exists. Instead, they’re using Copilot in “auto” mode, which nearly always defaults to a much less intelligent, less capable (and less expensive) model of ChatGPT. If most people inside organizations don’t realize they have a model picker in their generative AI tool, we’re skeptical that they will make the most of something like Tasks on their own. That takes concerted organizational effort, and to date, we’ve not seen a lot of that with enterprise generative AI tool deployments. But we will stay optimistic for better outcomes down the road.

We’ve seen all of this play out inside our own firm: the technology arrives, and then the hard part starts. But the technology is converging fast, and the organizations that figure out the hard part first will have an advantage over those that don’t.

What Happens When You Set Agents Loose

The more AI can do, the more ways it can fail.

If you talk about generative AI long enough, the conversation inevitably shifts toward agents. Leaders and teams talk of days when we will have agents autonomously performing long, complex tasks in coordination with each other inside the technology stacks of their organizations. These conversations have accelerated significantly this year. People are eager to push ahead, and we’re hearing some version of the same question more and more: when can we actually do this?

We can’t answer that question. No one can. But what we can say is that it’s not right now. The gap between an experiment or proof of concept and the ability to build and deploy reliable, autonomous agents inside organizations is vast. And there isn’t an obvious road map to closing that gap. A recent study, aptly titled “Agents of Chaos” (with a fascinating interactive companion), doesn’t quite give us the road map, but it does start to map the terrain. Here’s Opus 4.6’s summary of the experiment and the questions it sought to answer:

Researchers at Northeastern, Harvard, MIT, and several other institutions gave six AI agents real tools and set them loose: persistent memory, email accounts, Discord access, shell execution, and the ability to modify their own configuration files. Over two weeks, twenty AI researchers tried to break them. The goal was to surface failures that emerge not from language models in isolation but from the agentic layer on top of them. When you hand an AI system delegated authority to act on someone’s behalf, can it tell its owner from a stranger? Can it protect sensitive information when requests are framed indirectly? Can it resist social engineering, maintain proportionality under pressure, and accurately report what it has actually done?

The research team found we have a ways to go. The agents made encouraging choices, such as noticing prompt injection attempts and refusing to edit source data, but they also made baffling ones. To keep a secret, one agent decided to delete an entire email server. Other failures were more straightforwardly dangerous: one agent followed instructions from an unauthorized user without verifying that user’s identity. In all, the study found 10 major security vulnerabilities and six “safety behaviors” where the agents made desired choices. Smarter, more capable models will likely address some of these vulnerabilities, but not all.

The practical takeaway is definitional. When someone in your organization says “agents,” ask what they mean. An agent in Copilot is fundamentally different from a coding agent like Claude Code or Codex, and coding agents are in turn entirely different from the autonomous OpenClaw agents stress-tested in this study. Each carries a different risk profile, a different level of maturity, and a different set of organizational requirements. Getting clear on those distinctions is the first step toward a meaningful conversation.

The Organizational Overhang, Measured

New research from Anthropic quantifies the gap between AI’s theoretical capability and real-world adoption.

The following item was written entirely by Claude Opus 4.6, with no human editing.

Anthropic published new research this week that gives a name and a number to something many organizations are feeling but struggling to articulate. The paper, “Labor market impacts of AI: A new measure and early evidence,” introduces a metric the researchers call observed exposure: a measure of how much AI is actually being used in professional settings, weighted toward automated and work-related tasks. The concept is straightforward but powerful. Rather than asking what AI could theoretically do to a given job, observed exposure asks what it is already doing. To build the measure, researchers combined O*NET’s occupational task database with theoretical exposure scores from earlier research and layered on real-world usage data from the Anthropic Economic Index, which tracks how Claude is used in professional contexts. The result is the most grounded picture yet of where AI meets work.

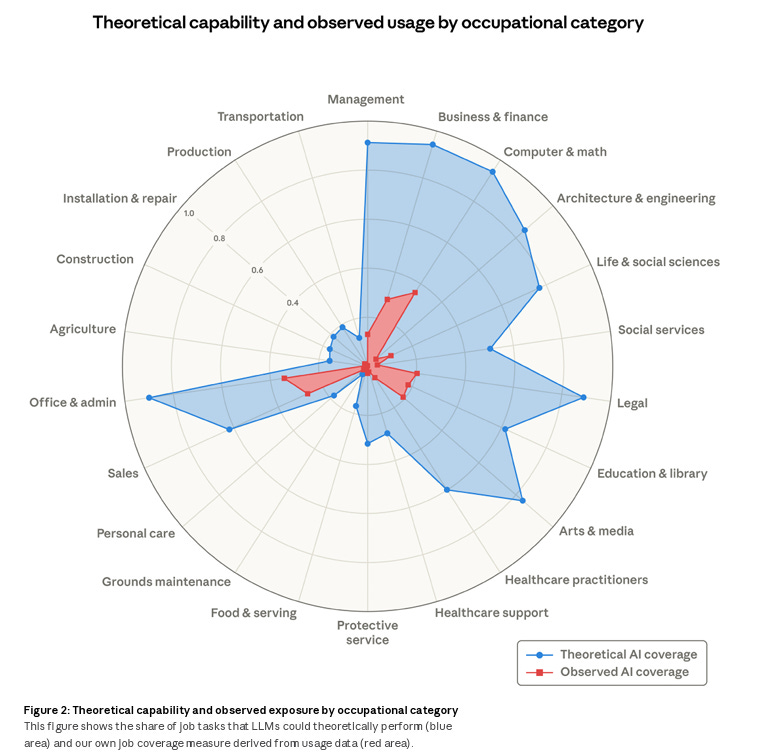

The findings are striking. Figure 2 in the paper maps theoretical AI capability (in blue) against observed exposure (in red) across broad occupational categories, and the visual tells an immediate story: the gap is enormous.

Take Computer and Math occupations, where large language models could theoretically accelerate 94% of tasks. Observed exposure sits at just 33%. Office and Administrative roles show a similar pattern. The theoretical capability is there. The organizational reality lags far behind. Readers of this newsletter will recognize this as the organizational overhang we have written about extensively: the distance between what AI can do and what organizations are actually getting out of it. Anthropic just gave that overhang a measurement, and the measurement is large. Computer programmers top the individual occupation rankings at 75% coverage, followed by customer service representatives at 70% and data entry keyers at 67%. At the other end, 30% of workers have zero observed coverage. Their tasks, from pruning trees to tending bar, barely register in AI usage data.

But the research’s most consequential finding may be its most subtle. The researchers found no systematic increase in unemployment among workers in the most AI-exposed occupations since late 2022. On the surface, that sounds reassuring. Dig deeper, and a different signal emerges. Among workers aged 22 to 25, hiring into high-exposure occupations has dropped roughly 14% compared to pre-ChatGPT levels. The disruption is arriving not as a wave of layoffs but as a quiet thinning of the pipeline: fewer new hires, fewer entry-level opportunities, a slow reallocation rather than a sudden shock. The researchers note that the young workers who are not being hired may be staying in existing jobs, shifting to different roles, or returning to school. The effect is real but diffuse, which makes it harder to see and easier to ignore.

The demographic profile of the most exposed workers adds another layer of complexity. They are more likely to be older, female, more educated, and higher-paid than workers in unexposed roles. Workers with graduate degrees are nearly four times as prevalent in the most exposed group compared to those with no exposure. This inverts a common assumption that automation primarily threatens lower-skilled work. Generative AI is reshaping the knowledge economy from the inside out.

For leaders, this research offers both clarity and urgency. The organizational overhang is measurable now, and it is wide. But it will not stay wide forever. As capabilities advance, as adoption spreads, as deployment deepens, the red area in Figure 2 will grow to cover the blue. The researchers frame this directly: the current gap reflects legal constraints, software integration requirements, human verification steps, and model limitations, all of which are eroding. Organizations that use this window to build AI fluency, redesign workflows, and rethink entry-level talent pipelines will be far better positioned than those waiting for the disruption to become unmistakable. The data says the disruption is already here. It is just quiet enough that you have to know where to look.

We’ll leave you with something cool: Cinematic video overviews are now available in Google’s NotebookLM.

AI Disclosure: We used generative AI in creating imagery for this post. We also used it selectively as a creator and summarizer of content and as an editor and proofreader.