Confluence for 5.8.26

Why you shouldn't have AI record your meetings. Listening to AI discuss your work. Rising tides and one crashing wave. Claude’s Visual Tells.

Welcome to Confluence. Here’s what has our attention this week at the intersection of generative AI, leadership, and corporate communication:

Why You Shouldn’t Have AI Record Your Meetings

Listening to AI Discuss Your Work

Rising Tides and One Crashing Wave

Claude’s Visual Tells

Why You Shouldn’t Have AI Record Your Meetings

At least, not unless you want altered dialogue to go with those meeting notes.

One of the early use cases for generative AI was the addition of AI note-taking agents to most online meeting platforms. Zoom, Teams, WebEx, and others now have agents that will record the dialogue, create a transcription, and summarize the transcript into meeting notes. A host of new personal technology devices also offer this ability: pins, pens, and other items that you can wear or carry, which will create a conversational transcript and then summarize it into notes using generative AI (fed to the note-keeping app of your choice).

Like most people, we thought these were helpful when they were released and looked forward to using them. But we’ll let you in on a secret: we don’t use them, and we don’t think you should either. Why? Because we think they create a chilling effect on the dialogue, altering what people would have said and how they would have said it. And we want to favor quality of dialogue over quality of notes.

There are four reasons for this. One is the question of legal discovery. While the case law is new on this, there are already several rulings noting that information submitted to AI services can be open to legal discovery and that the use of an AI agent can waive privilege. If you’re recording a conversation with an AI agent, it’s probably legally on the record, perhaps even if you’re an attorney meeting with a client.

Second, large language models can hallucinate, as we all know. While hallucination rates are improving, we often don’t know what underlying model is running the transcription and how prone to hallucination it may be. The simple change of one word from “does” to “doesn’t” can dramatically alter the meaning of a conversation or decision. We prefer not to leave that to chance unless we can verify and validate the summarized notes against the originals.

Third is context. Meaning is contextually situated, and an AI agent reading a transcription cannot read vocalics or body position, and may not even understand the surrounding conversational context in which something is said. The risk of something being taken out of context in that process is, we think, significant, and it’s a mistake that humans participating in the meeting and taking their own notes are far less likely to make.

Fourth and perhaps most important is candor and openness. Healthy relationships and healthy teams depend on a high level of openness to have effective conversations. This willingness to take conversational risk is also called psychological safety, and all strong relationships and teams have a healthy dose of it. People need to feel that they can say the controversial thing without concern that others will misinterpret or punish them for doing so.

While the specific literature on the chilling effects of meeting transcription on conversation is emergent, we think it’s pretty clear that the act of recording a conversation reduces the level of psychological safety that people feel within it. Everyone in a non-recorded meeting knows that others are taking notes, but the fact that those notes aren’t mechanically recorded, placed on a specific record, filed, and then available to others later changes the way people think and feel about that conversation. Simply put, we think recording alters the dialogue in ways not beneficial to healthy team function. [1]

Many of the online meeting platforms with AI agents create AI transcription by default. We have that turned off, and we don’t turn it on unless we decide, in agreement with all parties, that the particular conversation would be better recorded and transcribed than not. Further, when we convene a meeting and see an AI note-taking agent in the meeting room, we dismiss it or ask the person who created it to dismiss it.

Quality dialogue is difficult enough in a world where people are meeting virtually more often than not. It’s critically important to the effectiveness of teams, relationships, and leadership. We should all want people to have as much candor and openness in conversation as we can muster, without concern that something has been taken out of context or placed in a permanent digital record. Our advice: turn off the agents except when you need to turn them on.

Listening to AI Discuss Your Work

A surprising thing we’ve learned from the Confluence podcast.

Last August, we began using NotebookLM to produce the Confluence podcast for two primary reasons. First, to provide another way for our subscribers to engage with the content. Second, to serve as a kind of real-time benchmark of audio generation capabilities. Nearly a year into producing it, we’ve come to appreciate a third benefit we did not initially anticipate: it gives us, as the writers of Confluence, the chance to hear two increasingly intelligent AI personas discuss our writing. The experience was uncanny at first, and often still is. But it’s become a part of the weekly Confluence rhythm that this writer, at least, has come to look forward to.

We’ve talked about the value of using AI to critique your work from the perspective of different audiences for as long as we’ve been using the technology. Hearing AI discuss your work is a different experience. Something about it is more visceral and more realistic. If reading AI feedback on a draft can feel like getting marked-up homework back (which is valuable), hearing AI discuss it feels more like overhearing two people talking about your work in the next room. The format is closer to how ideas actually travel and how ideas land (or don’t). Members of your audience read something, they riff on it, and they talk about what stuck and what didn’t. Listening to AI discuss your work replicates the distance inherent in that experience. It positions you as a fly on the wall, hearing how your ideas register with someone else.

The distance allows you to quickly sense whether your work landed as intended. If the AI misses your point, your audience probably will, too. But as the models have gotten more intelligent, we’ve also come to appreciate how often the AI will articulate an idea more clearly than we did. It’s not uncommon for us to listen to the Confluence podcast and find the hosts using metaphors or framing concepts in ways we wish we had used ourselves.

NotebookLM remains the best tool we’ve found for this, but the capability is diffusing. Copilot Notebooks offers something similar, and some of our clients have begun using it to create audio versions of their own content. If you haven’t yet heard AI discuss your work, we’d encourage you to try it on a working draft or something you can still edit. You may find that you and your work are better for having heard AI talk it through, not just share its thoughts with you in writing. We look forward to hearing our AI hosts discuss this one.

Rising Tides and One Crashing Wave

New revenue data and new research tell two very different stories about AI’s reach.

Anthropic’s annualized revenue run-rate passed $30 billion in April, up from $9 billion at the end of 2025. The Atlantic reported last week that this growth is without modern precedent: Anthropic is growing faster than Zoom during the COVID-19 pandemic, faster than Google in the early 2000s, faster even than Standard Oil during the Gilded Age. More than 1,000 businesses are each spending over $1 million annually on Claude, a figure that doubled in under two months. The Atlantic attributes the inflection largely to coding agents, specifically Claude Code and its competitors like OpenAI’s Codex, with Ethan Mollick calling the shift “a step change.” Back in January, we wrote that Claude Code represented the near-term future of how people would use generative AI, and that the capabilities it unlocked would eventually reach mainstream tools. The revenue data now backs that up in dramatic fashion. Coding assistance is the clearest commercial success story in generative AI, and it is pulling away from everything else.

Meanwhile, a new study from the MIT FutureTech team offers a wider-angle view. Researchers evaluated AI capabilities across more than 3,000 tasks drawn from the Department of Labor’s O*NET classification, collecting over 17,000 assessments from workers in those jobs. Their central finding: AI automation can be thought of as “rising tides,” broad and steady improvement across a wide range of tasks, rather than “crashing waves” that disrupt a few occupations suddenly. In the second quarter of 2024, AI models completed tasks that typically take humans three to four hours at about a 50% success rate. By the third quarter of 2025, that figure had climbed to roughly 65%. If current trends hold, the researchers project 80% to 95% success rates on most text-based work by 2029, at what they call “minimally sufficient” quality. The authors are careful to note that this projection assumes continued improvement at the pace of the past two years, and that there are reasons it could slow.

The capability improvement is happening everywhere, and yet commercial traction is concentrated in one domain. Developers have tight feedback loops, version control, testing infrastructure, and a culture of iteration that gave AI tools natural scaffolding to deliver measurable value. The revenue has followed. The rest of the enterprise is working with tools that are increasingly capable, as the MIT data confirms, but without equivalent scaffolding. The organizational overhang we have been documenting all year is widening in a specific way: the functions where AI already works are accelerating, while the functions where it could work are still sorting out the basics.

The MIT study’s “rising tides” finding should sharpen the urgency. If AI capability were concentrated in a few occupations, leaders could treat this as a technical transformation affecting certain teams. Broad-based improvement across thousands of tasks means every function is on the curve, whether it has the scaffolding or not. We wrote recently that the hardest part of building an agent is organizational design: deciding who has authority to do what, what “done” looks like, and which workflows are worth automating. That principle applies at the enterprise level. The revenue will follow wherever organizations build the structures that allow these tools to deliver consistent, reviewable, measurable work. The question is whether leaders are building those structures deliberately or assuming the gap will close on its own. So far, the evidence says it won’t.

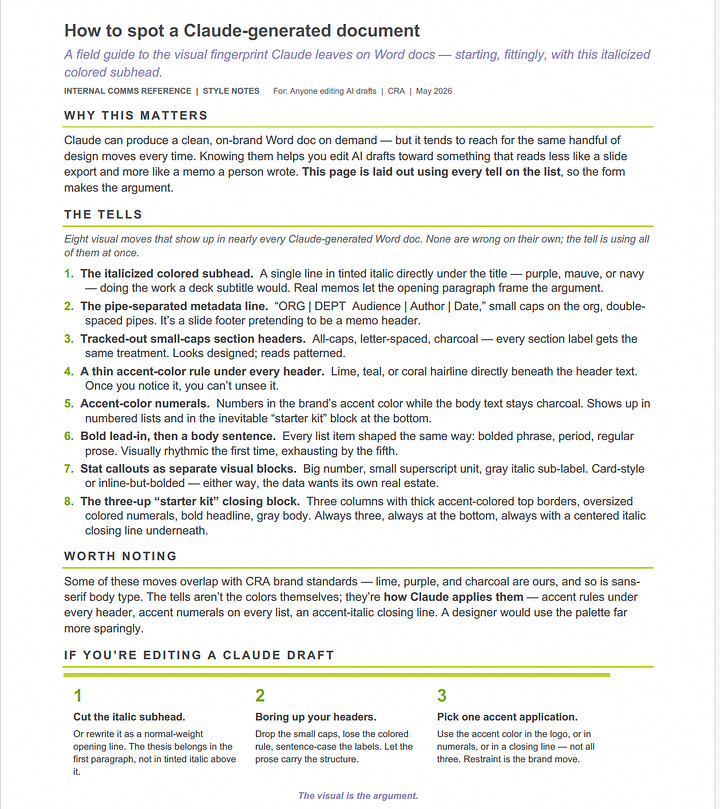

Claude’s Visual Tells

Frontier models like Claude are developing visual signatures as recognizable as their verbal ones.

By now, most of us can name a handful of words and phrases characteristic of AI-generated writing. The “it’s not [X], it’s [Y]” sentence structure is probably the most famous, along with words like “delve” (which we’ve written about before) and the overuse of em dashes (though plenty of us humans are still guilty of that one).

Those are the classics, but new ones surface with every model release. We’ve noticed Claude Opus 4.7 has a fondness for the word “move” and structural metaphors, describing ideas as “load bearing” or deserving more “weight.” ChatGPT loves to talk about goblins and gremlins, so much so that OpenAI recently published an internal investigation into “where the goblins came from.”

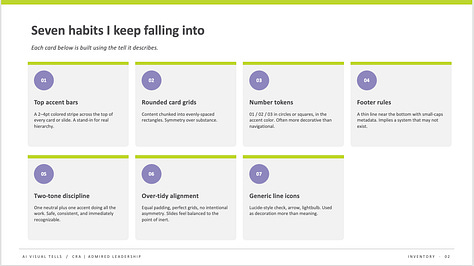

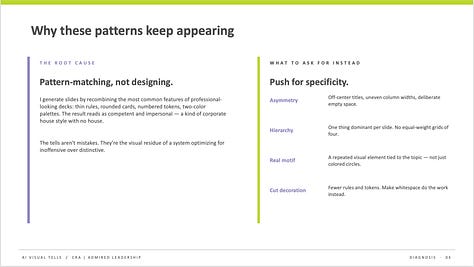

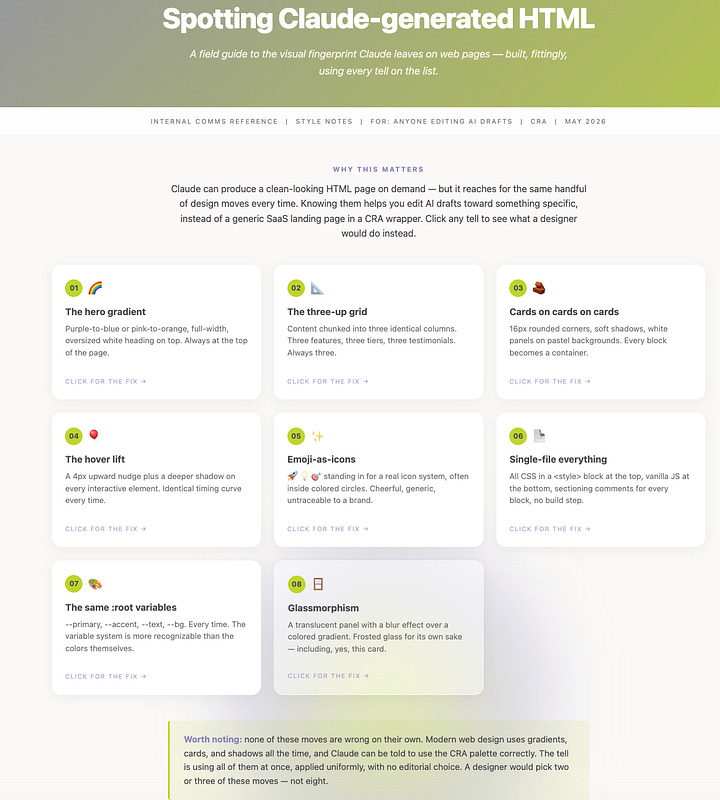

Now that the frontier models, Claude especially, are getting better at creating documents and presentations, we’re noticing similar telltale patterns emerging in AI-generated visuals and design. In PowerPoint and HTML files, for example, Claude almost always adds a thin, colored bar across the top or bottom of a slide, text card, or section header. It also tends to use a two- or three-color palette, defaults to small body text paired with outsized numbered lists, and writes declarative, period-heavy slide titles. Claude-generated Word documents have a similar look, but often with italicized colored subheadings, the author’s name and date listed up top, and thin colored borders under section headers. In general, we’ve noticed that LLMs use more color in Word documents than humans usually do.

We had Claude generate the examples below by asking it to identify its own visual trademarks and design a PowerPoint, HTML, and Word document accordingly.

Not all of these choices, individually, scream “Claude.” But together they create a certain visual aesthetic that does. Research shows that people get better at sniffing out AI-generated language the more they use LLMs in their own work. Our guess is that the same will hold true for visual language. As using AI to design presentations becomes even more common, these stylistic choices will likely become the visual equivalent of “it’s not [X], it’s [Y]”: dead giveaways for AI-generated work.

We’ll leave you with something cool: OpenAI released new voice models with GPT-5-class reasoning capabilities.

AI Disclosure: We used generative AI in creating imagery for this post. We also used it selectively as a creator and summarizer of content and as an editor and proofreader.

[1] The literature on psychological safety with AI transcription is emergent, but there’s an early study here. And this paper has a nice reference list of studies covering these topics and more.