Confluence for 3.31.24

The Trough of Disillusionment. Pew survey on ChatGPT use. OpenAI previews Voice Engine. Erik Brynjolfsson on AI, jobs, and inequality. Adobe launches brand content development tool.

Good Sunday morning (at least here in the United States). Spring has arrived in much of this part of the world, and spring flowers with it, as today’s beautiful Midjourney-generated image suggests. Here’s what has our attention this week at the intersection of generative AI and corporate communication:

The Trough of Disillusionment

Pew Survey on ChatGPT Use

OpenAI Previews Voice Engine

Erik Brynjolfsson on AI, Jobs, and Inequality

Adobe Launches Brand Content Development Tool

The Trough of Disillusionment

Expect talk of bust to follow the hype — but don’t believe it.

We have already started to notice a turn in the conversation about generative AI, one that leans more skeptical than optimistic. Axios has noticed the same turn and reports on it in “AI Chatbot Letdown: Hype Hits Rocky Reality,” suggesting that the technology is entering what Gartner’s Hype Cycle model¹ calls the “trough of disillusionment.” The gist: after a period of inflated expectations, the limitations and challenges of generative AI are becoming more apparent, prompting a more sober assessment of its current capabilities.

The Axios article outlines several hurdles facing the technology, from embarrassing errors in generated content to concerns about intellectual property infringement, cost, and environmental impact. The article quotes AI ethics expert Rumman Chowdhury, who argues that generative AI remains a “party trick” unless significant work is done to address issues like bias and the inability to discern information from training data. The piece also acknowledges, though, that this trough is a common phase for new technologies and that many in the industry remain optimistic about generative AI’s future potential.

We, too, are observing this trough of disillusionment, and we’re seeing it show up in two ways. One is people expressing skepticism about generative AI without having spent significant time using the technology themselves. This is skepticism from the sidelines. The second is people who have experimented with these tools but found them lacking in practical utility, leading them to disregard the technology altogether. This is the “party trick” group, echoing Chowdhury’s sentiments.

What we notice, though, is that we don’t see the same level of disillusionment among those who have spent more than 10 or 20 hours using generative AI. One could argue that this is simply belief reinforcement, a kind of self-fulfilling prophecy, but we don’t think so (even though we, admittedly, count ourselves in this group). In our experience, this continued enthusiasm stems from a simple dynamic: the more people engage with these tools, the more ways they discover to use them for tangible benefits at work and in their personal lives. These people find all sorts of innovative applications for generative AI that go beyond surface-level party tricks while still fitting within their organization’s security and policy schemes (which, in many cases, means using the technology via personal accounts on personal devices), unlocking genuine productivity gains and creative possibilities.

If anything, these folks are the most excited about what the future holds for generative AI. They recognize that the technology is still in its early stages, that many current limitations are likely not insurmountable, that policy and security schemes will evolve as the technology becomes more ubiquitous, and that these tools will only become more capable in more interesting and useful ways. We have already written that our own experience with Claude 3 Opus signaled, for us, an important evolution in the utility of generative AI, and as the Axios article notes, OpenAI CEO Sam Altman has hinted that the next iteration of the company’s language model, GPT-5, will offer significant improvements in general reasoning compared to its predecessors. For those who have already witnessed the potential of generative AI firsthand, these promises of further advancements are a source of anticipation.

The term “trough of disillusionment” may be borrowed from the world of tech, but we’re reminded of another concept from the industry that we find relevant: the notion that people tend to overestimate the impact of a technology’s first wave but underestimate the second. It’s our view that this applies to generative AI. Further, given the rapid pace of technological evolution in this space, we think the current trough will be short-lived, and that the primary obstacles to widespread adoption within organizations will have less to do with the day-to-day utility of the tools themselves and more to do with the development of robust policies, governance structures, and security measures to ensure their responsible and effective use.

Pew Survey on ChatGPT Use

Among U.S. adults, ChatGPT use — especially for work — is trending upward.

While the Axios article we discuss above focuses on “grumbles about generative AI’s shortcomings,” Pew released a survey report this week showing that Americans’ use of ChatGPT continues to increase. ChatGPT use doesn’t capture the full picture of generative AI use, but it is a reasonable proxy, and the data deserve attention. They show that among U.S. adults, ChatGPT use is trending up across age groups, education levels, and use cases (work, learning, and entertainment). Some highlights:

As of February 2024, 23% of U.S. adults say they have used ChatGPT, up from 18% in July 2023.

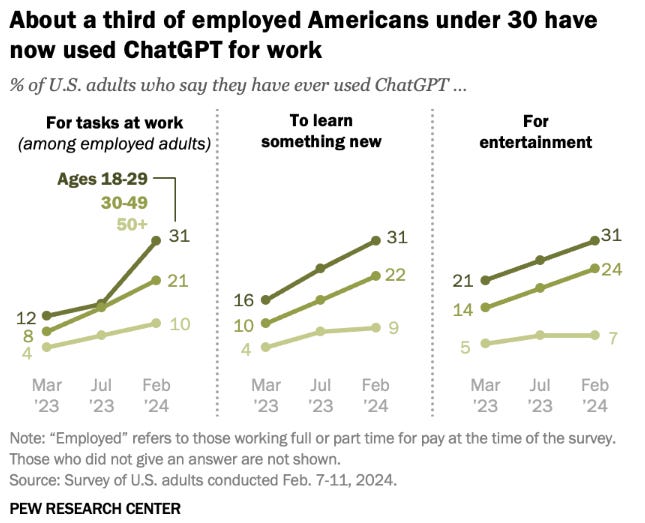

Younger adults are more likely to have used ChatGPT, with 43% of adults aged 18-29 and 27% of adults aged 30-49 reporting having used ChatGPT.

Adults with higher levels of education are more likely to have used ChatGPT, with 37% of adults with a postgraduate or advanced degree and 29% with a bachelor’s degree reporting use.

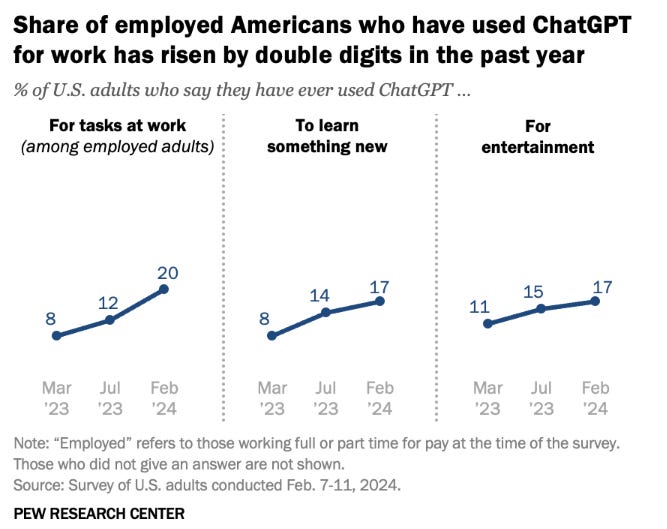

More American adults are using ChatGPT at work. Twenty percent of U.S. adults report having used ChatGPT for a task at work, up from 8% a year earlier and 12% in July 2023. The age trends noted above hold here as well: 31% of adults aged 18-29 and 21% of adults aged 30-49 report using ChatGPT for work.

The data on ChatGPT use at work are particularly interesting when compared with other uses, as the charts below show:

All curves point upward, but notice the difference in shape. Although the time series includes only three points so far, the data show that ChatGPT use for work is increasing at a greater rate than other uses. The summer of 2023 appears to be where the work curve began to steepen, though three points are too few to call it an inflection point in the strict mathematical sense.
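To make the comparison concrete, here is a quick back-of-the-envelope calculation using only the Pew figures quoted above for July 2023 and February 2024. It assumes the 20% work figure comes from the same February 2024 wave as the 23% overall figure, and it compares the percentage-point change with the relative change for each series. It is simple arithmetic on published toplines, not a reanalysis of Pew’s data.

```python
# Back-of-the-envelope comparison using the Pew figures quoted in this section.
# Values are the percent of U.S. adults reporting each kind of ChatGPT use,
# July 2023 -> February 2024 (assumes both figures come from those two waves).
WAVES = {
    "any use": (18, 23),
    "use for work": (12, 20),
}


def change(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    points = after - before
    relative = 100 * points / before
    return points, relative


for label, (before, after) in WAVES.items():
    points, relative = change(before, after)
    print(f"{label}: +{points} points, +{relative:.0f}% relative growth")
```

By this rough measure, overall use grew 5 points (about 28% in relative terms) while work use grew 8 points (about 67%), so work use more than doubled the relative growth rate of overall use over the same period. With so few survey waves, this is a directional signal rather than a trend estimate.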

It’s important to note that this study focuses solely on ChatGPT, which is the most widely used generative AI tool but not the only one. Given this, it’s likely that the study underrepresents the actual use of generative AI overall, including for work. We’ll also note that using ChatGPT — especially for work — still involves some friction: users need to create accounts, toggle between windows and applications, copy and paste text into and out of ChatGPT, cleanse data (assuming responsible use), and more. We continue to expect generative AI to be increasingly integrated into the applications and workflows that employees already use, which will reduce that friction and drive usage even further.

The data clearly show where this trend is heading. The question for our readers is: Are you proactively positioning your team and your organization to embrace and keep pace with these changes?

OpenAI Previews Voice Engine

We are not far from producing natural-sounding speech from only 15-second reference samples.

This week, OpenAI introduced Voice Engine, a new model that generates speech closely resembling the original speaker’s voice from just a 15-second sample. We’ve covered voice generation in previous editions of Confluence, and while the functionality OpenAI shows with Voice Engine isn’t unexpected (though the quality is impressive), the use cases OpenAI highlights are worth our attention. These include translating and creating new audio in various languages while retaining the original voice, developing tools for community health workers, and aiding those who’ve lost their voice, among others.

Critically, these are not use cases that OpenAI designed. The company shared Voice Engine with select partners and let them experiment and discover the types of problems the model could solve for them. The results are impressive, and we think it’s reasonable to assume that these partners found specific use cases for Voice Engine that the team at OpenAI did not anticipate.

As tools like Voice Engine become publicly available, we must identify the best ways to use them. To start building our understanding of how Voice Engine could help corporate communication professionals, we asked ChatGPT for advice. Its answer:

Based on the information from the OpenAI webpage on their new Voice Engine, here are ten potential applications for corporate communication professionals:

Personalized Customer Support: Create virtual assistants with natural-sounding, emotive voices for more personalized and engaging customer support experiences.

Multilingual Content Creation: Use the Voice Engine for seamless translation of corporate communications, preserving the speaker's accent across multiple languages, thus maintaining authenticity while reaching a global audience.

Training and Development: Develop interactive training programs with natural-sounding voices in multiple languages, tailored to different roles within the organization.

Accessibility Enhancements: Offer reading assistance and content accessibility for employees and customers who are visually impaired or have reading difficulties.

Corporate Announcements and Updates: Disseminate information using the voices of company leaders, making corporate communications more personal and engaging.

Voice Cloning for Busy Executives: Clone the voices of executives for use in internal or external communications when they are unavailable to record messages themselves.

Branding with Unique Voices: Create unique, brand-specific voices for use in marketing and advertising, enhancing brand recognition and emotional connection with the audience.

Emergency Communications: Utilize the Voice Engine to quickly produce vital emergency messages in multiple languages, ensuring clarity and reducing response times during crises.

Voice-based Surveys and Feedback: Implement engaging, voice-driven surveys for internal feedback or market research, making the process more interactive and less tedious.

E-learning and Onboarding: Enhance e-learning modules and onboarding experiences with realistic, varied voices, making educational content more engaging and easier to understand for new hires.

These applications leverage the potential of synthetic voices to make corporate communications more personalized, accessible, and engaging across various contexts.

This is a solid, if general, start. We then asked ChatGPT to highlight five risks related to Voice Engine for corporate communication professionals:

When considering the use of Voice Engine technology, corporate communication professionals should be mindful of nuanced risks including:

Authenticity Dilemma: Overreliance on synthetic voices might dilute personal connections between leaders and their teams, impacting perceived authenticity.

Voice Bias: Unintentional biases in voice design could reinforce stereotypes or exclude certain groups, affecting brand perception and inclusivity.

Overpersonalization: Excessive customization might invade privacy or create discomfort among audiences, leading to negative experiences.

Dependency and Loss of Skills: Relying on automated voices for communication can erode traditional communication skills among employees.

Misrepresentation Risks: Unauthorized use of someone’s voice could lead to misrepresentation or fraud, damaging reputations and trust.

These risks highlight the importance of ethical considerations and balance in deploying synthetic voice technologies.

Again, we wouldn’t presume this list to be exhaustive, but it gets us thinking about the risks of such a technology in ways we may not have previously.

With OpenAI and others working on models for audio and video generation, corporate communication professionals need to stay ahead and be ready to make the most of these technologies as they become available. There will not be an instruction manual on how to use these tools for corporate communications. We have to build it ourselves.

Erik Brynjolfsson on AI, Jobs, and Inequality

The economist talks with The Atlantic about navigating the economic impact of the rapidly advancing technology.

One of the leading voices in the discourse surrounding the economic impact of artificial intelligence is Erik Brynjolfsson, a professor and senior fellow at the Stanford Institute for Human-Centered AI and the director of the Stanford Digital Economy Lab. His book “The Second Machine Age,”² co-authored with Andrew McAfee, explores how digital technologies are transforming the economy and society. It argues that we are entering a second era of profound technological change, and that navigating this transition will require a deep understanding of how technology interacts with labor markets, skills, and the distribution of wealth. We recommend it.

The Atlantic, which in our view has been doing an excellent job covering generative AI, recently published an interview with Brynjolfsson that probes his views on the impact of generative AI on jobs and income inequality. We’ve been advising our clients for well over a year now to pay attention to the larger technical and economic conversation about generative AI, and in that spirit, we think the interview is worth reading. The conversation, conducted by Nicholas Thompson, touches on several key mechanisms through which previous waves of technological advancement have had economic consequences, including skill-biased technical change, shifts in the balance between capital and labor, and the outsized rewards accrued by “superstars” in various fields.

When it comes to the potential impact of AI, Brynjolfsson offers a nuanced perspective. He points to some early evidence suggesting that AI could help reduce inequality in certain contexts, such as a study he conducted on the introduction of a large language model in a call center. In that case, the AI tool disproportionately benefited less-skilled workers by effectively transferring the tacit knowledge of more experienced employees. Brynjolfsson also, though, acknowledges the possibility that AI could accelerate trends toward greater concentration of wealth and power, particularly if organizations use it primarily to substitute for human labor rather than complement it. He emphasizes the importance of consciously steering the development and deployment of AI toward creating tools that augment human capabilities rather than replace them outright.

Ultimately, Brynjolfsson’s insights underscore both the profound challenges and the exciting opportunities that lie ahead as we navigate this new era of technological change. While The Atlantic and folks like Brynjolfsson may not be on your regular list of sources about this technology, they deserve to be. The more general context you have about what things mean and where they’re going, the better able you’ll be to navigate whatever is about to come next.

Adobe Launches Brand Content Development Tool

GenStudio is probably a harbinger of the future in more ways than one.

Adobe has announced GenStudio, an application that marks a significant step forward in integrating generative AI into traditional marketing and creative workflows. The tool aims to provide a comprehensive solution for marketers to create, manage, and measure the performance of personalized content across various channels, with a strong emphasis on brand safety and adherence to brand guidelines. It is designed to answer the question, “How do we use generative AI to create, manage, and track all sorts of content, using existing brand assets, in a brand-consistent way?”

It’s a good example of the growing trend of generative AI being woven into conventional communication and creative processes. While much of the current debate surrounding generative AI within organizations revolves around deployment questions, such as which tools to allow, which to build, and when and how to implement the technology, we think GenStudio (along with Microsoft’s Copilot for Microsoft 365) highlights a likely future: instead of organizations grappling with these decisions independently, providers will increasingly integrate AI capabilities directly into the tools that businesses already rely on. In many cases, this will effectively render moot the question of whether to allow employees to use generative AI, as it becomes an inherent part of their existing workflows.

Will organizations that already use Adobe tools decide not to use them because of restrictive generative AI policies? Will they choose to build their own instead? In the long run, we don’t think so in either case. We think it’s more likely that the integration of generative AI at “the edge,” via applications like Microsoft 365 and Adobe GenStudio, will be a forcing mechanism for the adoption of generative AI inside organizations. And as professionals begin to use these AI-powered features within familiar tools, we expect their desire to use generative AI in less tool-specific contexts, through platforms like ChatGPT, Claude, and Gemini, to only increase.

We’ll leave you with something cool: How a range of visual artists are using OpenAI’s text-to-video model, Sora.

AI Disclosure: We used generative AI in creating imagery for this post. We also used it selectively as a creator and summarizer of content and as an editor and proofreader.

¹ Gartner describes its Hype Cycle on its website. Here is Claude 3 Opus’ take on its strengths and weaknesses:

Gartner's Hype Cycle is a graphical representation of the maturity, adoption, and social application of specific technologies. The methodology aims to provide a snapshot of the relative maturity of technologies and their potential relevance to solving real business problems and exploiting new opportunities. The Hype Cycle consists of five key phases:

Innovation Trigger: A potential technology breakthrough kicks things off, generating significant press and interest.

Peak of Inflated Expectations: Early publicity produces a number of success stories, often accompanied by scores of failures.

Trough of Disillusionment: Interest wanes as experiments and implementations fail to deliver. Producers of the technology shake out or fail.

Slope of Enlightenment: More instances of how the technology can benefit the enterprise start to crystallize and become more widely understood.

Plateau of Productivity: Mainstream adoption starts to take off and the technology's broad market applicability and relevance are clearly paying off.

Strengths: Gartner's Hype Cycle is a widely recognized and influential research methodology that provides valuable insights into technology trends. Its key strengths include:

Clear and concise representation: The Hype Cycle provides an easily understandable snapshot of the relative maturity and adoption of technologies.

Comprehensive coverage: Gartner produces numerous Hype Cycles covering a wide range of industries and technology domains.

Informed decision-making: The Hype Cycle helps organizations make informed decisions about when to invest in or adopt a particular technology.

Structured framework: The five key phases of the Hype Cycle offer a structured framework for understanding the typical progression of a technology.

Identification of opportunities and risks: The Hype Cycle can help organizations identify emerging technologies that may offer significant opportunities, as well as potential risks associated with adoption.

Facilitates communication and strategic thinking: The Hype Cycle provides a common language for discussing technology trends and encourages organizations to think strategically about technology adoption and investment.

Widely recognized and influential: As a well-established methodology, the Hype Cycle has significant influence on technology decision-making and can help organizations align their strategies with industry trends.

Critique: While Gartner's Hype Cycle is a widely recognized and influential tool for understanding technology trends, it has several limitations:

Oversimplification: The Hype Cycle reduces complex technological, social, and economic factors into a simple curve, which may not accurately reflect the nuances of each technology's development and adoption.

Lack of quantitative data: The Hype Cycle is primarily based on qualitative analysis and expert opinion, rather than quantitative data. This makes it difficult to compare technologies or make precise predictions about their future trajectories.

Bias towards hype: By focusing on the "hype" surrounding new technologies, the methodology may overemphasize the importance of trendy or heavily promoted technologies while overlooking less glamorous but potentially more impactful ones.

Limited scope: The Hype Cycle only covers a select number of technologies and may not provide a comprehensive view of the entire technology landscape.

Short-term focus: The methodology is primarily concerned with the short-term adoption and maturity of technologies, and may not adequately consider their long-term implications or potential for disruptive change.

Lack of industry-specific insights: While Gartner does provide some industry-specific Hype Cycles, the general methodology does not account for the unique factors that shape technology adoption in different sectors.

Self-fulfilling prophecy: The high visibility and influence of the Hype Cycle may actually shape the perception and adoption of technologies, potentially creating a feedback loop that reinforces its own predictions.

Despite these limitations, Gartner's Hype Cycle remains a valuable tool for understanding technology trends and guiding investment and adoption decisions. However, it should be used in conjunction with other forms of analysis and should not be relied upon as the sole source of insight into the complex and ever-evolving world of technology.

² Claude 3 Opus’ critique of the book:

"The Second Machine Age" by Erik Brynjolfsson and Andrew McAfee is a thought-provoking book that explores the impact of digital technologies on the economy, society, and the future of work. The authors argue that we are entering a new era of technological progress, driven by advances in artificial intelligence, robotics, and digital networks, which will fundamentally transform the way we live and work. While the book presents a compelling vision of the future, there are some areas where the critique could be more nuanced.

Strengths:

1. The authors provide a clear and accessible overview of the key technological trends driving the second machine age, such as Moore's Law, digitization, and the increasing power of artificial intelligence.

2. They present a balanced view of the potential benefits and challenges of these technologies, acknowledging both the opportunities for economic growth and the risks of job displacement and rising inequality.

3. The book includes numerous real-world examples and case studies that illustrate the impact of digital technologies on various industries and sectors, making the arguments more concrete and relatable.

Weaknesses:

1. The authors may overstate the speed and extent of technological change in some areas. While the pace of innovation is indeed rapid, the adoption and diffusion of new technologies can be slower and more uneven than suggested in the book.

2. The book could have provided a more in-depth analysis of the social and political implications of the second machine age. While the authors touch on issues such as income inequality and the need for education and training, these discussions could have been more extensive and nuanced.

3. The authors' policy recommendations, such as investing in education and infrastructure, are somewhat general and lack specificity. A more detailed exploration of potential policy solutions would have strengthened the book's argument.

4. The book may underestimate the resilience and adaptability of human labor in the face of technological change. While some jobs will undoubtedly be automated, history shows that new jobs and industries often emerge in response to technological shifts.

Overall, "The Second Machine Age" is a valuable contribution to the ongoing debate about the impact of digital technologies on the economy and society. While the book has some limitations, it provides a thought-provoking and accessible introduction to the key issues and challenges we face in the era of rapid technological change.
